Porting a Code RAG system from Python to Go: What the AI got wrong

Why we rewrote Kodit from Python to Go, what broke along the way, and what the new version means for users and integrators.

Kodit started as a Python project: an MCP server and CLI for indexing code repositories that combines BM25 keyword search with vector embeddings and reciprocal rank fusion to give AI coding assistants the context they need. Python served well for prototyping: FastAPI, SQLAlchemy, Pydantic, and a rich ecosystem of ML libraries made it straightforward to build and iterate.

But Python added friction. Deploying Kodit into the Helix ecosystem meant shipping a Python runtime, managing pip dependencies, and accepting the performance overhead of an interpreted language on a search-heavy workload. Since Helix is a Go project, it was obvious that Kodit should be in Go too. The goal was feature parity with the Python version, plus something new: a clean Go client API so that Helix and other projects could import Kodit as a library, not just call it as a server.

This article is the story of that migration: what changed architecturally, what broke along the way, and what the new Go version means for users. For the generic methodology behind AI-assisted cross-language migrations, see the companion article on Winder.AI.

The Migration Approach

The full methodology is covered in the Winder.AI article, but the short version is this: I set up a monorepo with the Python source and Go target side by side, wrote two design documents (CLAUDE.md for domain context and coding standards, MIGRATION.md for an ordered task checklist), and used Claude Code to generate the Go implementation in an automated loop.

What was specific to Kodit was the domain modelling.

Bounded contexts. Kodit has four distinct areas: repositories (the code sources being indexed), enrichments and snippets (the indexed content and its metadata), search (the query pipeline), and configuration. Each maps to a directory in the Go codebase with its own domain, application, and infrastructure layers.

Ubiquitous language. Terms like enrichment, association, snippet, and embedding model have precise meanings in the Kodit domain. These were documented in a glossary in CLAUDE.md so the AI would use them consistently rather than inventing its own terminology. Getting this right matters: when the AI starts calling an enrichment a “document” or a snippet a “chunk”, the generated code drifts from the existing schema and APIs.

Layered architecture. The Go codebase follows a DDD-inspired structure: domain types have no external dependencies, application services orchestrate use cases, and infrastructure implementations handle persistence and external APIs. Layer rules are enforced by Go’s package system. The domain package never imports infrastructure.

This structural discipline paid off during the automated generation phase. With clear boundaries, the AI could generate code for one context without accidentally coupling it to another.
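A minimal single-file sketch of that layering, assuming a simplified Enrichment type and an in-memory store standing in for the database. All names here (EnrichmentStore, EnrichmentService, memoryStore) are hypothetical and not taken from the Kodit codebase. In a real module each section would live in its own package, and the compiler would reject a domain package that imports infrastructure.

```go
package main

import (
	"errors"
	"fmt"
)

// --- domain layer: plain types and interfaces, no external dependencies ---

// Enrichment is a hypothetical, simplified domain type.
type Enrichment struct {
	ID      int
	Content string
}

// EnrichmentStore is declared by the domain and implemented by infrastructure.
type EnrichmentStore interface {
	ByID(id int) (Enrichment, error)
}

// ErrNotFound is returned when no enrichment has the requested ID.
var ErrNotFound = errors.New("enrichment not found")

// --- application layer: orchestrates a use case through the interface ---

// EnrichmentService depends only on the domain interface.
type EnrichmentService struct {
	store EnrichmentStore
}

func NewEnrichmentService(store EnrichmentStore) *EnrichmentService {
	return &EnrichmentService{store: store}
}

// Describe loads one enrichment and formats it for display.
func (s *EnrichmentService) Describe(id int) (string, error) {
	e, err := s.store.ByID(id)
	if err != nil {
		return "", fmt.Errorf("describe enrichment %d: %w", id, err)
	}
	return fmt.Sprintf("#%d: %s", e.ID, e.Content), nil
}

// --- infrastructure layer: a concrete store (in-memory stand-in for a database) ---

type memoryStore struct {
	rows map[int]Enrichment
}

func (m memoryStore) ByID(id int) (Enrichment, error) {
	e, ok := m.rows[id]
	if !ok {
		return Enrichment{}, ErrNotFound
	}
	return e, nil
}

func main() {
	store := memoryStore{rows: map[int]Enrichment{1: {ID: 1, Content: "func Login()"}}}
	svc := NewEnrichmentService(store)
	out, err := svc.Describe(1)
	fmt.Println(out, err)
}
```

Because the service only sees the interface, swapping the in-memory store for a SQL-backed one would not touch the domain or application code.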

Architectural Decisions

Several important design decisions were made during the migration. Some were intentional. Some were discovered by accident.

Public API vs Internal

The AI defaulted to placing everything in Go’s internal/ directory. This is idiomatic Go: internal/ prevents external projects from importing your packages. But the whole point of this migration was to make Kodit consumable as a Go library. I needed Helix to be able to import Kodit’s search client, repository types, and configuration directly.

I discovered this problem halfway through the migration. Everything compiled. Tests passed. But nothing was importable from outside the module. The refactor to extract a proper public API surface was substantial. It required deciding which types and interfaces belonged in the public package, which stayed internal, and how the public client would wrap the internal application services.

The result is a clean Go client that any project can import:

    import "github.com/helixml/kodit/client"

    // Create a client pointing at a running Kodit server.
    c, err := client.New(client.Config{
        BaseURL: "http://localhost:8080",
    })

    // Search a single repository, returning at most 10 results.
    results, err := c.Search(ctx, client.SearchQuery{
        Query:      "authentication middleware",
        Repository: "myorg/myrepo",
        Limit:      10,
    })

The lesson: define your public API surface before generating any code. If I had specified this in CLAUDE.md from the start, the AI would have structured the code around the public interface rather than burying everything in internal/.

The Snippets Resurrection

This was the standout domain failure of the migration.

In the early Python version, Kodit stored snippets in their own database table. Later, I consolidated the design: snippets became a type of unified enrichment, stored in the enrichments table with associations linking them to repositories and other enrichments. This simplified the schema to essentially two core tables: enrichments and associations. All content, whether a code snippet, a description, an embedding, or a repository reference, was an enrichment linked by associations.

But remnants of the old design remained in the Python codebase. Type hints referencing a Snippet model. Comments mentioning the snippets table. Variable names like snippet_results. The AI saw these, recognised that “snippet” was a core domain concept (it was in the ubiquitous language glossary, after all), and rebuilt the entire deprecated table and data access layer.

I only discovered the problem when I ran a migration test: importing real data from a running Python instance into the new Go version. The data migrated successfully (enrichments landed in the enrichments table), but searches returned zero results. The Go search pipeline was querying the snippets table, which was empty.

The fix was another refactor. “Snippet” touched nearly every layer of the codebase: domain types, repository interfaces, application services, API handlers, database queries. Every reference had to be redirected to the enrichments table and its association-based data model.
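To make the association-based model concrete, here is a rough sketch of looking up snippets through enrichments and associations instead of a dedicated snippets table. The struct fields, the "snippet" and "repository" string values, and the function name are assumptions for illustration; the real schema is not shown in this article.

```go
package main

import "fmt"

// Enrichment is a hypothetical row in the enrichments table.
// Kind distinguishes snippets from other content such as descriptions.
type Enrichment struct {
	ID      int
	Kind    string
	Content string
}

// Association is a hypothetical row in the associations table,
// linking an enrichment to another entity (here: a repository).
type Association struct {
	EnrichmentID int
	EntityType   string
	EntityID     string
}

// SnippetsForRepository returns enrichments of kind "snippet" that are
// associated with the given repository. No snippets table is involved.
func SnippetsForRepository(es []Enrichment, as []Association, repo string) []Enrichment {
	linked := make(map[int]bool)
	for _, a := range as {
		if a.EntityType == "repository" && a.EntityID == repo {
			linked[a.EnrichmentID] = true
		}
	}
	var out []Enrichment
	for _, e := range es {
		if e.Kind == "snippet" && linked[e.ID] {
			out = append(out, e)
		}
	}
	return out
}

func main() {
	es := []Enrichment{
		{ID: 1, Kind: "snippet", Content: "func Login() {}"},
		{ID: 2, Kind: "description", Content: "Handles login"},
	}
	as := []Association{
		{EnrichmentID: 1, EntityType: "repository", EntityID: "myorg/myrepo"},
		{EnrichmentID: 2, EntityType: "repository", EntityID: "myorg/myrepo"},
	}
	fmt.Println(SnippetsForRepository(es, as, "myorg/myrepo"))
}
```

In a database-backed version the same filter would be a join between the two tables rather than two loops.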

The lesson is twofold. First, clean up dead references before migration. If deprecated code exists anywhere in the source, the AI will find it and use it. Second, migration tests are essential. Smoke tests with fresh data are not sufficient. You need to test with real data from the previous version to catch schema-level regressions.

Configuration Scattering

The AI scattered configuration defaults and overrides across multiple files. A default embedding model in one package. An overridden batch size in another. Environment variable reads in a third. The Go version had no single place where you could see what the system’s configuration was, what the defaults were, or where values were being mutated.

The principle I enforced during refactoring: configuration should be set, defaulted, logged, validated, and mutated in exactly one place. In the Go version, this is the config package. Application services receive their configuration at construction time and never read environment variables or apply defaults themselves.
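As a sketch of what "exactly one place" can look like, the following assumes two illustrative settings and made-up environment variable names (KODIT_EXAMPLE_EMBEDDING_MODEL, KODIT_EXAMPLE_BATCH_SIZE); neither the names nor the default values come from Kodit's actual config package.

```go
package main

import (
	"errors"
	"fmt"
	"os"
	"strconv"
)

// Config holds hypothetical settings; field names are illustrative.
type Config struct {
	EmbeddingModel string
	BatchSize      int
}

// Load is the only place that reads the environment, applies defaults and validates.
// getenv is injected so the function can be tested without touching the process environment.
func Load(getenv func(string) string) (Config, error) {
	cfg := Config{EmbeddingModel: "example-embedding-model", BatchSize: 32} // defaults
	if v := getenv("KODIT_EXAMPLE_EMBEDDING_MODEL"); v != "" {
		cfg.EmbeddingModel = v
	}
	if v := getenv("KODIT_EXAMPLE_BATCH_SIZE"); v != "" {
		n, err := strconv.Atoi(v)
		if err != nil {
			return Config{}, fmt.Errorf("KODIT_EXAMPLE_BATCH_SIZE: %w", err)
		}
		cfg.BatchSize = n
	}
	if cfg.BatchSize <= 0 {
		return Config{}, errors.New("batch size must be positive")
	}
	return cfg, nil
}

// Indexer receives its configuration at construction time and never reads the environment.
type Indexer struct{ batchSize int }

func NewIndexer(cfg Config) *Indexer { return &Indexer{batchSize: cfg.BatchSize} }

func main() {
	cfg, err := Load(os.Getenv)
	if err != nil {
		fmt.Println("invalid configuration:", err)
		os.Exit(1)
	}
	fmt.Printf("effective configuration: %+v\n", cfg) // logged once, in one place
	_ = NewIndexer(cfg)
}
```

Passing getenv as a parameter is a design choice for testability; calling os.Getenv directly inside Load would satisfy the same principle.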

In-Memory Pagination

The AI initially created list endpoints that loaded all records from the database and paginated in memory. An obvious and stupid error.

I caught this during code review and required proper LIMIT/OFFSET queries flowing from the API layer through the application service into the database query. The pagination parameters are defined at the API boundary and propagated down to the DB.

The broader pattern here is that AI-generated code tends to take the path of least resistance. Loading everything and slicing in Go is simpler to write because the infrastructure is already there. Doing it the right way, threading pagination parameters through three layers, touches a lot of code. If you care about performance at scale, you need to specify these constraints in the design.
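One way to thread pagination through the layers is a small value type that is normalised at the API boundary and handed down unchanged. The sketch below is an assumption-laden illustration: the default of 20, the cap of 100, the table and column names, and the PostgreSQL-style $1/$2 placeholders are all made up for the example.

```go
package main

import "fmt"

const (
	defaultLimit = 20  // assumed default page size
	maxLimit     = 100 // assumed upper bound to protect the database
)

// Page carries pagination parameters from the API boundary down to the query.
type Page struct {
	Limit  int
	Offset int
}

// Normalize applies the default limit and clamps out-of-range values.
func (p Page) Normalize() Page {
	if p.Limit <= 0 {
		p.Limit = defaultLimit
	}
	if p.Limit > maxLimit {
		p.Limit = maxLimit
	}
	if p.Offset < 0 {
		p.Offset = 0
	}
	return p
}

// listEnrichmentsQuery builds a parameterised query so the database does the paging.
// A stable ORDER BY is needed for LIMIT/OFFSET pages to be consistent.
func listEnrichmentsQuery(p Page) (string, []any) {
	p = p.Normalize()
	query := "SELECT id, content FROM enrichments ORDER BY id LIMIT $1 OFFSET $2"
	return query, []any{p.Limit, p.Offset}
}

func main() {
	q, args := listEnrichmentsQuery(Page{Limit: 500, Offset: 40})
	fmt.Println(q, args)
}
```

The query string and arguments would then be passed to the database driver, so only one page of rows ever leaves the database.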

Testing and Validation

Building confidence in the new version required multiple layers of testing. No single strategy was sufficient on its own.

Unit Tests

Unit tests are fast and catch regressions in individual components, but they say nothing about whether the system works end-to-end. In the first version I focussed more on representative, real-life end-to-end and smoke tests.

Smoke Tests

I created a pair of smoke test suites: one targeting the Python version, one targeting the Go version, both executing the same sequence of operations. Index a repository. Create enrichments. Run a search. Compare results.

These smoke tests caught wiring issues that unit tests could not: missing middleware, incorrect route registrations, serialisation differences between FastAPI and Go’s HTTP handlers.

After creating a PostgreSQL dump from the Python-era database, I wrote a new smoke test that ingests it and exercises other end-to-end workflows.

API Parity via OpenAPI

This test compares the OpenAPI specification generated by the Go version against the one generated by the Python version. It caught missing endpoints, wrong parameter types, incorrect response schemas, and structural differences that would break existing clients.

If you are migrating a web API, this test is essential. It provides a machine-readable contract between the old and new implementations.
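A reduced sketch of such a parity check, assuming both specifications are available as JSON documents. It only detects operations (method plus path) that exist in the reference spec but not in the candidate; comparing parameter types and response schemas would need a fuller comparison. The endpoint paths in main are invented for the example.

```go
package main

import (
	"encoding/json"
	"fmt"
	"sort"
	"strings"
)

// spec models only the part of an OpenAPI document needed here:
// paths -> key (HTTP method or other field) -> undecoded value.
type spec struct {
	Paths map[string]map[string]json.RawMessage `json:"paths"`
}

// httpMethods lists the operation keys allowed in an OpenAPI path item.
var httpMethods = map[string]bool{
	"get": true, "put": true, "post": true, "delete": true,
	"options": true, "head": true, "patch": true, "trace": true,
}

// operations returns the set of "METHOD path" keys in a spec.
func operations(raw []byte) (map[string]bool, error) {
	var s spec
	if err := json.Unmarshal(raw, &s); err != nil {
		return nil, err
	}
	ops := make(map[string]bool)
	for path, item := range s.Paths {
		for key := range item {
			if httpMethods[key] { // skip non-operation keys such as "parameters"
				ops[strings.ToUpper(key)+" "+path] = true
			}
		}
	}
	return ops, nil
}

// MissingOperations lists operations present in the reference spec but absent from the candidate.
func MissingOperations(reference, candidate []byte) ([]string, error) {
	ref, err := operations(reference)
	if err != nil {
		return nil, fmt.Errorf("reference spec: %w", err)
	}
	cand, err := operations(candidate)
	if err != nil {
		return nil, fmt.Errorf("candidate spec: %w", err)
	}
	var missing []string
	for op := range ref {
		if !cand[op] {
			missing = append(missing, op)
		}
	}
	sort.Strings(missing)
	return missing, nil
}

func main() {
	pythonSpec := []byte(`{"paths":{"/search":{"get":{},"post":{}},"/repositories":{"get":{}}}}`)
	goSpec := []byte(`{"paths":{"/search":{"get":{}}}}`)
	missing, err := MissingOperations(pythonSpec, goSpec)
	fmt.Println(missing, err)
}
```

Wrapped in a test that fails when the returned slice is non-empty, a check like this turns the old implementation's spec into a contract for the new one.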

Ranking Comparison

The most revealing test was a direct side-by-side comparison of search results. I ran the same queries against both versions and compared the ranked output.

The results were initially wrong. Completely wrong. The investigation uncovered multiple issues:

Truncation error. When converting embeddings to VectorChord’s database format, the Go version was incorrectly truncating the float arrays. Dimensions were being lost.

RRF indexing error. The reciprocal rank fusion implementation had an off-by-one error when combining BM25 and semantic rankings.

Wrong embedding read. The AI had added unrequested functionality to read multiple embedding formats from disk. This caused it to load the wrong embedding for a given snippet, producing nonsensical similarity scores.

Each of these passed unit tests in isolation. Only the end-to-end ranking comparison revealed the compounding effect.
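For reference, reciprocal rank fusion scores each document as the sum over rankings of 1 / (k + rank), where rank starts at 1. The sketch below is a generic implementation, not Kodit's code: the constant k = 60 is a commonly used value and an assumption here, and the article does not say exactly where the off-by-one occurred. Treating a 0-based slice index as the rank is one common way to introduce such an error.

```go
package main

import (
	"fmt"
	"sort"
)

// rrfK is the smoothing constant; 60 is a commonly used value and an assumption here.
const rrfK = 60.0

// Fuse combines ranked lists of document IDs with reciprocal rank fusion
// and returns the IDs ordered by descending fused score.
func Fuse(rankings ...[]string) []string {
	scores := make(map[string]float64)
	for _, ranking := range rankings {
		for i, id := range ranking {
			rank := float64(i + 1) // ranks are 1-based; using i directly shifts every score
			scores[id] += 1.0 / (rrfK + rank)
		}
	}
	ids := make([]string, 0, len(scores))
	for id := range scores {
		ids = append(ids, id)
	}
	sort.Slice(ids, func(a, b int) bool {
		if scores[ids[a]] != scores[ids[b]] {
			return scores[ids[a]] > scores[ids[b]]
		}
		return ids[a] < ids[b] // deterministic tie-break
	})
	return ids
}

func main() {
	bm25 := []string{"a", "b", "c"}     // keyword ranking, best first
	semantic := []string{"b", "c", "a"} // vector ranking, best first
	fmt.Println(Fuse(bm25, semantic))
}
```

A unit test on Fuse alone can pass while the overall ranking is still wrong, which is why the side-by-side comparison against the Python output was the test that mattered.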

There was a silver lining. During this debugging, Claude noticed that the codebase was using L2 (Euclidean) distance rather than cosine distance for vector similarity. This was likely degrading results in the Python version too. A genuine improvement discovered by accident.
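The difference between the two metrics is easy to see on a toy example. L2 distance is sensitive to vector magnitude, while cosine distance only looks at direction; for unit-length vectors the two produce the same ordering. Whether Kodit's embeddings are normalised is not stated here, so the vectors below are purely illustrative.

```go
package main

import (
	"fmt"
	"math"
)

// L2Distance returns the Euclidean distance between a and b.
// Both slices are assumed to have the same length.
func L2Distance(a, b []float64) float64 {
	var sum float64
	for i := range a {
		d := a[i] - b[i]
		sum += d * d
	}
	return math.Sqrt(sum)
}

// CosineDistance returns 1 - cosine similarity.
// It returns 1 when either vector has zero length, since direction is undefined.
func CosineDistance(a, b []float64) float64 {
	var dot, na, nb float64
	for i := range a {
		dot += a[i] * b[i]
		na += a[i] * a[i]
		nb += b[i] * b[i]
	}
	if na == 0 || nb == 0 {
		return 1
	}
	return 1 - dot/(math.Sqrt(na)*math.Sqrt(nb))
}

func main() {
	query := []float64{1, 0}
	sameDirection := []float64{10, 0} // same direction as the query, much larger magnitude
	nearby := []float64{0.6, 0.8}     // different direction, similar magnitude

	fmt.Println("L2:    ", L2Distance(query, sameDirection), L2Distance(query, nearby))
	fmt.Println("cosine:", CosineDistance(query, sameDirection), CosineDistance(query, nearby))
}
```

With these inputs L2 ranks the nearby vector first and cosine ranks the same-direction vector first, so the choice of metric can change which result is returned at the top.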

Migration Test

Testing with real data migrated from the old Python database to the new Go schema. This is what caught the snippets table regression described above. If you are rewriting a system that has existing production data, migration tests are non-negotiable. They test the one thing smoke tests cannot: whether the new system correctly handles legacy data.

What the AI Got Wrong

To be specific about where the AI failed on this project:

Resurrecting deprecated features. The snippets table rebuild was the most expensive failure. The AI saw domain references, inferred importance, and recreated dead functionality. The fix touched dozens of files.

Dead code accumulation. After refactoring from internal/ to a public API, orphaned packages remained. They appeared used because other orphaned packages imported them. Identifying dead code required understanding the full dependency graph, which the AI could not do unprompted.

Excessive functionality. The AI added features not present in the Python version: multiple embedding format readers, alternative search strategies, extra configuration options. Each addition introduced potential bugs with zero user value.

Missing end-to-end wiring. Individual components worked. The application as a whole did not start correctly the first time. The AI generated each piece but never ran the server. Wiring errors (missing dependency injection, incorrect initialisation order) only appeared when the full system was assembled.
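The dead code problem above is a reachability question: a package is live only if it can be reached from an entry point by following imports. A rough sketch of that check, assuming the import graph has already been extracted into a map (for example from the output of go list); the package names in main are invented.

```go
package main

import (
	"fmt"
	"sort"
)

// Unreachable returns packages that cannot be reached from the given roots
// by following import edges. Orphans that only import each other are reported,
// even though each of them appears "used" when inspected in isolation.
func Unreachable(imports map[string][]string, roots ...string) []string {
	seen := make(map[string]bool)
	stack := append([]string(nil), roots...)
	for len(stack) > 0 {
		pkg := stack[len(stack)-1]
		stack = stack[:len(stack)-1]
		if seen[pkg] {
			continue
		}
		seen[pkg] = true
		stack = append(stack, imports[pkg]...)
	}
	var dead []string
	for pkg := range imports {
		if !seen[pkg] {
			dead = append(dead, pkg)
		}
	}
	sort.Strings(dead)
	return dead
}

func main() {
	// Hypothetical import graph: package -> packages it imports.
	imports := map[string][]string{
		"cmd/kodit":          {"client"},
		"client":             {"internal/search"},
		"internal/search":    nil,
		"internal/old/api":   {"internal/old/store"}, // orphan importing another orphan
		"internal/old/store": nil,
	}
	fmt.Println(Unreachable(imports, "cmd/kodit"))
}
```

For a library, the roots would also include every public package, not just the command entry points.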

The New Kodit

What users and integrators get from the Go version:

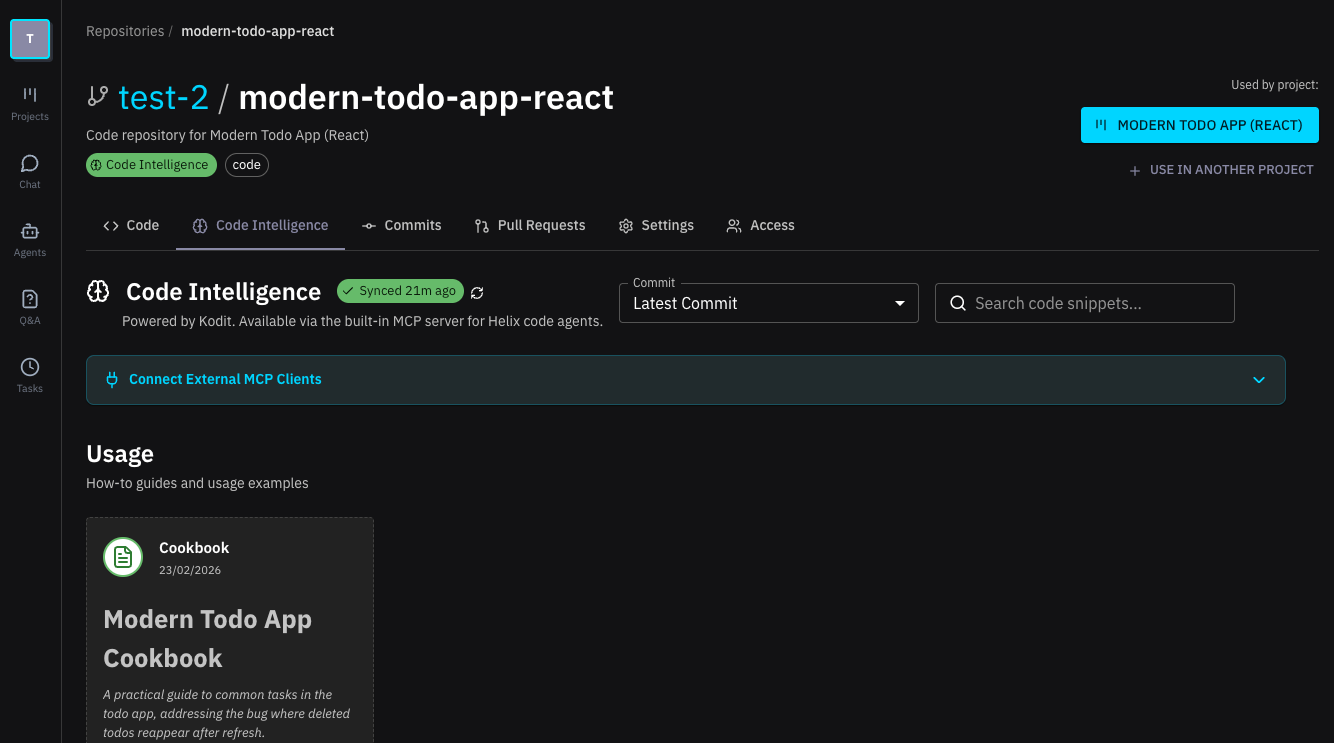

Go client library. Import github.com/helixml/kodit/client and use Kodit programmatically. Search, index repositories, manage enrichments, all through typed Go functions. This is the foundation for the Helix integration.

Same interfaces. The MCP server and CLI behave identically to the Python version. Existing users should see no difference in their workflow.

Database compatibility. SQLite for local-first usage. VectorChord/PostgreSQL for enterprise scale. The Go version supports both, matching the Python version’s flexibility.

Performance. The Go version benefits from compiled execution and Go’s concurrency model for parallel indexing and search. Formal benchmarks are forthcoming, but my initial testing shows roughly a 5x performance improvement, and the Go version was noticeably faster on large repositories.

What’s Next

The migration itself inspired new functionality. The dead code and orphaned package problems I encountered manually are exactly the kind of issues Kodit should detect automatically. Dead code detection and duplication analysis are on the roadmap. I also want to get back to benchmarking and indexing improvements.

The Helix integration is underway, with Kodit’s Go client providing native code search within the Helix platform. Community contributions are welcome, particularly around new enrichment strategies and search pipeline improvements.

The Kodit repository is open source. Issues, discussions, and pull requests are the best way to get involved.

Conclusion

The rewrite was worth it. The Go version is cleaner, faster to deploy, and designed for library consumption from the start. The AI-assisted approach compressed what would have been months of manual translation into about three weeks, but it required constant human oversight of architecture and domain correctness.

The biggest lesson is this: AI coding assistants are powerful translators but poor architects. They will faithfully convert Python patterns to Go patterns, function by function, file by file. But they cannot see the system as a whole. They cannot question whether a deprecated table should be rebuilt. They cannot decide which packages should be public. They cannot judge whether in-memory pagination is acceptable at scale.