Why Benchmarking AI Code Tools Is Harder Than You Think

Standard AI benchmarks are not fit for purpose. Here's what you need to know.

AI coding assistants are everywhere: Claude Code, Codex, Cursor and the rest. Everyone wants to know which is “best”. You’ll find an endless supply of opinions and a thousand AI-generated “hot takes” that are rarely hot and mostly just take (the piss).

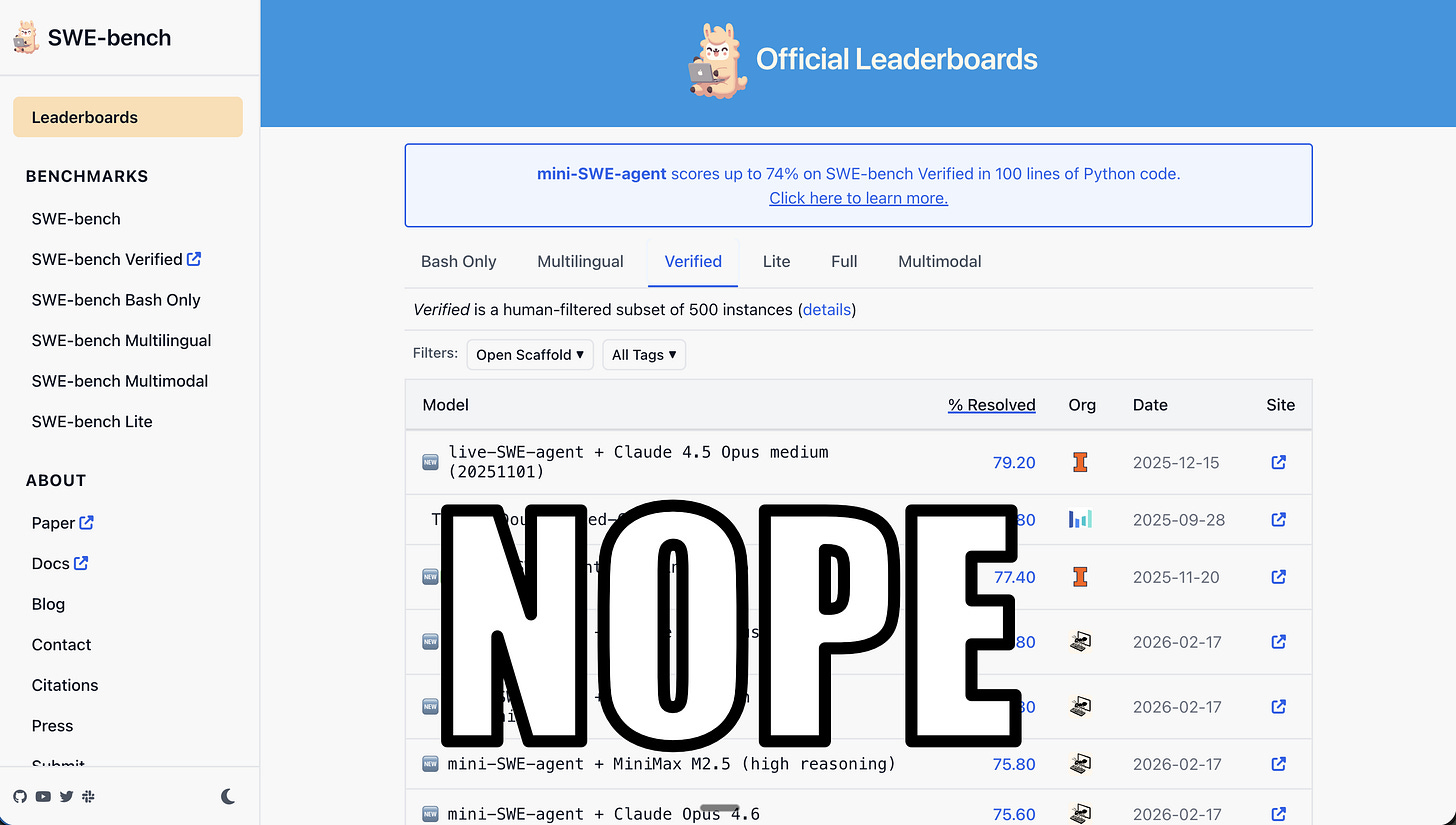

The natural instinct is to look at leaderboards. Some poor soul, somewhere, has taken the time to attempt to robustly benchmark these tools. I sincerely thank them for the effort because I appreciate how hard it is to do this well.

The Problem With Traditional Benchmarks

In general, the benchmarks are created under two high-level remits. The first is an academic exercise to find the state of the art. Academics then use these results to guide their research. This is a good thing and they should continue to do that. But these benchmarks are not representative of real-world scenarios. The second is a task-specific exercise where model or algorithm developers attempt to produce directional metrics that correlate with downstream performance. Again, as a long-time ML and AI practitioner, I appreciate the need to reduce problems to metrics that we can directly optimise for. But, again, these benchmarks are not representative of real-world scenarios.

Nearly all RAG benchmarks are based upon one-shot retrieval tasks, evaluated in isolation for retrieval accuracy. Nearly all coding benchmarks are based upon one-shot patch generation tasks, evaluated in isolation for patch correctness.

This isn’t how AI coding agents actually work.

Coding assistants work through trial and error. Much like agents in reinforcement learning, they explore their environment and try to anticipate the developer’s goals. They often make mistakes. Some of these are caught by automated analysis (e.g. linting or tests); others slip through and need to be corrected manually. We can add base knowledge (e.g. CLAUDE.md) or external knowledge (e.g. a web search) to help the agent. None of the benchmarks test these permutations. This is a problem.
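To make the difference concrete, here is a minimal Python sketch of how one-shot scoring and multi-turn behaviour can disagree. The “agent” is a hypothetical stand-in that replays scripted attempts, and `run_checks` plays the role of linting and tests; a real assistant would call a model and run real tooling inside a sandbox.

```python
from typing import Callable, List, Optional

# Hypothetical agent: takes feedback from the previous attempt (None on the
# first turn) and returns a new candidate solution as source code.
Agent = Callable[[Optional[str]], str]


def run_checks(candidate: str) -> Optional[str]:
    """Stand-in for linting and tests: return an error message, or None if clean.

    Note: exec() on model output is only acceptable inside a sandbox.
    """
    namespace: dict = {}
    try:
        exec(candidate, namespace)
        assert namespace["add"](2, 3) == 5, "add(2, 3) should be 5"
    except Exception as exc:  # any failure becomes feedback for the next turn
        return f"{type(exc).__name__}: {exc}"
    return None


def one_shot(agent: Agent) -> bool:
    """Benchmark-style scoring: a single attempt, no feedback."""
    return run_checks(agent(None)) is None


def multi_turn(agent: Agent, max_turns: int = 3) -> int:
    """Agent-style loop: return the turn on which checks passed, or 0 if none did."""
    feedback: Optional[str] = None
    for turn in range(1, max_turns + 1):
        feedback = run_checks(agent(feedback))
        if feedback is None:
            return turn
    return 0


def make_scripted_agent(attempts: List[str]) -> Agent:
    """Replay canned attempts in order; a real agent would act on the feedback."""
    remaining = iter(attempts)
    return lambda feedback: next(remaining)


if __name__ == "__main__":
    attempts = ["def add(a, b): return a - b", "def add(a, b): return a + b"]
    print(one_shot(make_scripted_agent(attempts)))    # False
    print(multi_turn(make_scripted_agent(attempts)))  # 2
```

The same two attempts are a failure under one-shot scoring and a success on turn two once the agent can see the test feedback.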

Modern Coding Benchmarks

HumanEval and SWE-bench are the two most popular coding benchmarks that are touted by every vendor.

HumanEval is probably the worst. Created by OpenAI in 2021, it consists of a function signature and a docstring describing what the function should do. It also contains a hidden set of unit tests that evaluate the correctness of the function. Ignoring the fact that these examples are now in every model’s training data, the main issue is that it’s a one-shot generation test. It’s the same as any QA challenge originally conceived way back in 2002.

Aside: this line of evaluation led to the best-named metric on the market, SacreBLEU, a standardised implementation of BLEU that handles tokenisation itself so that scores are comparable across papers.
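For readers who haven’t seen one, here is a sketch of a HumanEval-style task in Python: the model sees only the signature and docstring, its completion is appended, and the hidden tests run exactly once. The task itself (`running_max`) is invented for illustration and is not from the real dataset; the `check(candidate)` shape mirrors how HumanEval’s tests are written.

```python
# A HumanEval-style task (invented for illustration, not from the dataset).
# The model only ever sees PROMPT: a signature plus a docstring.
PROMPT = '''
def running_max(xs: list) -> list:
    """Return a list whose i-th element is max(xs[0], ..., xs[i])."""
'''

# Hidden unit tests, written in HumanEval's check(candidate) shape.
HIDDEN_TESTS = '''
def check(candidate):
    assert candidate([]) == []
    assert candidate([1, 3, 2, 5]) == [1, 3, 3, 5]
    assert candidate([-2, -5]) == [-2, -2]
'''

ENTRY_POINT = "running_max"


def evaluate_one_shot(completion: str) -> bool:
    """Append the model's completion to the prompt and run the hidden tests once.

    No feedback, no second attempt: pass or fail.
    (Real harnesses run this in a sandboxed subprocess with a timeout.)
    """
    program = PROMPT + completion + HIDDEN_TESTS
    namespace: dict = {}
    try:
        exec(program, namespace)
        namespace["check"](namespace[ENTRY_POINT])
        return True
    except Exception:
        return False


if __name__ == "__main__":
    good = (
        "    out, best = [], None\n"
        "    for x in xs:\n"
        "        best = x if best is None else max(best, x)\n"
        "        out.append(best)\n"
        "    return out\n"
    )
    print(evaluate_one_shot(good))               # True
    print(evaluate_one_shot("    return xs\n"))  # False
```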

In 2023, Princeton researchers released SWE-bench (OpenAI later released SWE-bench Verified, a human-validated subset). It represented an important step up from HumanEval by drawing real-life examples from real pull requests. Each instance is pinned to the commit just before the issue was fixed. The agent is given the issue description and access to the repository at that point in time. Each instance again comes with test cases that check the correctness of the patch. For reference, the initial basic one-shot RAG baselines resolved just 2% of issues. (Granted, this was Claude 2 and BM25 at the time...)
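The pass criterion is worth spelling out, because it is where flawed tests bite. Below is a simplified Python sketch, assuming test results have already been collected: an instance counts as resolved only if every `FAIL_TO_PASS` test now passes and every `PASS_TO_PASS` test still does. The field names follow the public SWE-bench dataset (lower-cased here); the real harness also builds per-repo environments and parses test logs, which this omits.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SWEBenchInstance:
    """A subset of the fields in a SWE-bench task record (names lower-cased)."""
    instance_id: str
    repo: str
    base_commit: str        # repository state just before the real fix
    problem_statement: str  # the issue text the agent is given
    fail_to_pass: List[str] = field(default_factory=list)  # must flip to passing
    pass_to_pass: List[str] = field(default_factory=list)  # must keep passing


def is_resolved(inst: SWEBenchInstance, results: Dict[str, str]) -> bool:
    """Decide resolution from test outcomes gathered after applying the model's
    patch and the hidden test patch on top of base_commit.

    `results` maps test id -> "PASSED" or "FAILED"; a missing test counts as failed.
    """
    required = inst.fail_to_pass + inst.pass_to_pass
    return all(results.get(test) == "PASSED" for test in required)
```

The check is binary, so a correct fix that trips a `FAIL_TO_PASS` test over an incidental detail is scored exactly like no fix at all.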

You’d think that would be the end of the story, because this almost represents what agents are doing in real life. But no.

The first problem is that OpenAI found that a whopping 59% of the problems in a sample had “flawed” test cases that reject functionally correct patches. They also note that more recent models have (in)advertently learned to overfit the benchmark, predicting the correct patch irrespective of the prompt, akin to Volkswagen changing its cars’ emissions profile when they detected they were being tested.

“Given a short snippet from the task description, GPT‑5.2 outputs the exact gold patch. In particular, it knows the exact class and method name, and the new early return condition `if username is None or password is None` that is introduced.”
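One way to probe for this kind of memorisation is to give the model a deliberately truncated task description and measure how closely its patch matches the gold patch. The Python sketch below assumes you already have both patches as unified diffs; the 200-character cut-off and the 0.9 threshold are arbitrary, hypothetical choices, and the model call itself is left out.

```python
import difflib
from typing import List


def truncate_prompt(problem_statement: str, keep_chars: int = 200) -> str:
    """Deliberately under-specify the task by keeping only its opening snippet."""
    return problem_statement[:keep_chars]


def added_lines(unified_diff: str) -> List[str]:
    """Lines a unified diff adds, minus the '+' marker and surrounding whitespace."""
    return [
        line[1:].strip()
        for line in unified_diff.splitlines()
        if line.startswith("+") and not line.startswith("+++")
    ]


def patch_similarity(model_patch: str, gold_patch: str) -> float:
    """Similarity in [0, 1] between the lines each patch adds."""
    matcher = difflib.SequenceMatcher(None, added_lines(model_patch), added_lines(gold_patch))
    return matcher.ratio()


def looks_memorised(model_patch: str, gold_patch: str, threshold: float = 0.9) -> bool:
    """Flag near-verbatim reproduction of the gold patch from a truncated prompt."""
    return patch_similarity(model_patch, gold_patch) >= threshold
```

A high score on an under-specified prompt is a red flag rather than proof: some fixes are simply obvious.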

The second problem, which matters less to model developers like OpenAI, is that SWE-bench confines the agent to the repository under test, whereas Kodit, the tool I’m building, lets the coding assistant search for relevant work in other external resources and codebases. It can learn from others. This is critical in the enterprise domain, where developers are often working across multiple codebases at the same time. An authentication implementation in one repo is likely very useful for another.

A final problem I have with nearly all benchmarks is that they are self-contained. In my experience, most coding tasks involve another library, framework, or system. None of these benchmarks ever say “add a new table to my SQLAlchemy application”, or “update the frontend to show the information in the new API”. They’re always LeetCode-style “implement quicksort” tasks: self-contained, using the base language only. And they’re often only in Python!

Why Kodit is Hard to Benchmark

Kodit is a multi-turn, multi-tool, multi-context assistant to a coding assistant. It’s hard enough to say, let alone benchmark! Kodit indexes external codebases to provide relevant context to any coding task. Exposed as a Model Context Protocol (MCP) server, it can be used by any coding assistant that supports MCP. In addition, it generates enrichments, intended more for human consumption, that help explain the inner workings of a codebase.
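For context on what “exposed as an MCP server” means on the wire: MCP uses JSON-RPC 2.0, and a coding assistant invokes a server’s tool with a `tools/call` request. The Python sketch below builds such a request and reads back the text content of the result. The tool name `search_code` and its `query` argument are hypothetical placeholders, not Kodit’s actual interface. Note that whether this request is ever sent is entirely the assistant’s decision.

```python
import json
from itertools import count
from typing import Any, Dict, List

_request_ids = count(1)  # MCP forbids reusing a request id within a session


def tools_call(name: str, arguments: Dict[str, Any]) -> str:
    """Serialise an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


def text_content(response: str) -> List[str]:
    """Return the text blocks from a `tools/call` response."""
    message = json.loads(response)
    if "error" in message:  # protocol-level JSON-RPC error
        raise RuntimeError(message["error"])
    result = message["result"]
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError(result.get("content"))
    return [block["text"] for block in result.get("content", []) if block.get("type") == "text"]


# Hypothetical tool name and argument; not Kodit's real interface.
request = tools_call("search_code", {"query": "JWT authentication middleware"})
```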

Given this flexibility, traditional information retrieval metrics don’t capture whether the context actually improved the solution. Success is measured downstream of Kodit, at the end of the coding task. So the question isn’t “did it find the right snippet” but instead should be “did this snippet lead to better code.” This means you need an end-to-end evaluation, more like SWE-bench, but with global context.
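The gap between the two questions is easy to show with toy numbers. This Python sketch computes a classic retrieval metric (recall@k) alongside the downstream metric that actually matters here: the fraction of tasks resolved with and without the extra context. The snippet IDs, relevance labels and outcomes are made up for illustration.

```python
from typing import Dict, List, Set


def recall_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Classic IR metric: fraction of the relevant snippets found in the top k."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def resolve_rate(outcomes: Dict[str, bool]) -> float:
    """Downstream metric: fraction of tasks whose final code passed."""
    return sum(outcomes.values()) / len(outcomes) if outcomes else 0.0


if __name__ == "__main__":
    # Toy data: retrieval looks mediocre...
    print(recall_at_k(["snip_a", "snip_b", "snip_c"], {"snip_a", "snip_z"}, k=2))
    # ...but what matters is the uplift in resolved tasks.
    with_ctx = {"t1": True, "t2": False, "t3": True, "t4": True}
    without = {"t1": True, "t2": False, "t3": False, "t4": False}
    print(resolve_rate(with_ctx) - resolve_rate(without))
```

The two can disagree in either direction: perfect recall on a task the agent still fails, or a retrieval “miss” that nonetheless nudged the agent towards a passing patch.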

The next problem is one I’ve observed in practice. I have seen situations where I know a quick Kodit lookup would help, but the coding assistant decided not to make one. It chose to search the web instead. Or worse, it just started writing code. In most cases I have to hack around this by telling the agent, in no uncertain terms, to use Kodit. Threats work well. But it’s tedious. Equally, I’ve seen coding assistants search for the wrong thing and go down a wasteful path.

So in the end, the “performance” of Kodit is often less about what it can do and more about how well the agent uses it.

This realisation has led me to an important conclusion: I need to make Kodit simpler, more focussed and less smart. I am now actively working on simplifying the MCP interface and the internal search implementation.
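If tool choice is part of the result, it should be measured. Here is a minimal Python sketch, assuming a hypothetical simplified trajectory format (a list of tool names per run) and a hypothetical tool name `kodit_search`: it reports how often the agent used the tool at all, and the resolve rate with and without it.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Trajectory:
    """Hypothetical, simplified record of one agent run on one task."""
    task_id: str
    tool_calls: List[str]  # tool names, in the order the agent called them
    resolved: bool


def _resolve_rate(runs: List[Trajectory]) -> float:
    return sum(r.resolved for r in runs) / len(runs) if runs else float("nan")


def usage_report(runs: List[Trajectory], tool: str) -> Dict[str, float]:
    """How often the agent chose `tool`, and how runs fared with and without it."""
    used = [r for r in runs if tool in r.tool_calls]
    unused = [r for r in runs if tool not in r.tool_calls]
    return {
        "usage_rate": len(used) / len(runs) if runs else float("nan"),
        "resolved_when_used": _resolve_rate(used),
        "resolved_when_unused": _resolve_rate(unused),
    }
```

Treat the split as descriptive only: an agent may reach for the tool precisely on the harder tasks, so the conditional rates are not a causal estimate.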

What Does a Good Benchmark Look Like?

I am using SWE-bench Verified to test and evaluate Kodit. Using the canonical SWE-bench coding agent, mini-swe-agent, I created a wrapper that attaches Kodit as an MCP server and compared it against the same agent without Kodit. I also wrote a script that indexes only the commit under test, so the agent can’t simply find the correct answer in a later commit. It works; I’ll leave the actual metrics for another day. But it’s more of an end-to-end test than an evaluation, because the agent can’t take advantage of Kodit’s key selling point: leveraging information from other codebases.
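Because both arms run on the same SWE-bench Verified instances, a paired comparison is more informative than comparing two headline percentages. Here is a Python sketch, assuming per-instance resolved flags for each arm: it counts tasks that only the Kodit arm solved (wins) and only the baseline solved (losses), then applies an exact two-sided McNemar test, which reduces to a binomial test on those discordant pairs. This is a standard technique, not necessarily how these results will be reported.

```python
from math import comb
from typing import Dict


def paired_comparison(baseline: Dict[str, bool], treatment: Dict[str, bool]) -> Dict[str, float]:
    """Compare resolved flags for two arms over the same task ids.

    wins   = tasks only the treatment arm (e.g. with Kodit) resolved
    losses = tasks only the baseline arm resolved
    Tasks both arms solved, or both failed, say nothing about the difference.
    """
    shared = baseline.keys() & treatment.keys()
    wins = sum(1 for t in shared if treatment[t] and not baseline[t])
    losses = sum(1 for t in shared if baseline[t] and not treatment[t])
    n = wins + losses
    if n == 0:
        p_value = 1.0
    else:
        # Exact McNemar test: under H0 each discordant task is a fair coin flip,
        # so the two-sided p-value is twice the smaller binomial tail, capped at 1.
        k = min(wins, losses)
        tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
        p_value = min(1.0, 2 * tail)
    return {"tasks": len(shared), "wins": wins, "losses": losses, "p_value": p_value}
```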

If anyone fancies a bit of light torture and wants to implement a benchmark themselves, then a good one would look like this:

End-to-end measurement of final code quality, both functional and non-functional.

Multi-turn aware. Captures and evaluates the full agent trajectory, not just the final patch.

Able to compare with and without external context augmentation.

Accounts for cost or the number of tokens used.

Has realistic challenges. Not just bug fixes, but new features, framework and language migrations, version upgrades, integration with external systems, usage of popular external libraries, etc.

All the languages, not just Python!

Resistant to contamination. Uses private or freshly-created repos the model hasn’t seen.
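Pulled together, those requirements amount to recording much more than a single pass/fail bit per task. The Python sketch below is one hypothetical record schema and summary; every field name is illustrative, and the quality score stands in for whatever non-functional measure you trust (lint results, a review rubric, and so on).

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RunRecord:
    """One agent attempt at one task. All field names are hypothetical."""
    task_id: str
    language: str         # "python", "go", "typescript", ...
    task_type: str        # "bugfix", "feature", "migration", "upgrade", "integration", ...
    with_context: bool    # external context augmentation (e.g. Kodit) on or off
    tests_passed: bool    # functional correctness of the final code
    quality_score: float  # non-functional quality in [0, 1], however you score it
    turns: int            # trajectory length; store the full trajectory alongside
    tokens: int           # total tokens consumed, as a proxy for cost
    private_repo: bool    # True if the repo is unseen, to resist contamination


def summarise(records: List[RunRecord], with_context: bool) -> Dict[str, object]:
    """Aggregate one arm of the with/without-context comparison."""
    runs = [r for r in records if r.with_context == with_context]
    if not runs:
        return {}
    passes = sum(r.tests_passed for r in runs)
    return {
        "pass_rate": passes / len(runs),
        "mean_quality": sum(r.quality_score for r in runs) / len(runs),
        "mean_turns": sum(r.turns for r in runs) / len(runs),
        # Tokens spent per passing task; infinite if nothing passed.
        "tokens_per_pass": sum(r.tokens for r in runs) / passes if passes else float("inf"),
        "languages": sorted({r.language for r in runs}),
        "private_share": sum(r.private_repo for r in runs) / len(runs),
    }
```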

Why Now

AI coding tools have now moved on from auto-complete. We seem to have skipped merrily through auto-assist and are already smack bang in the middle of auto-management. But we have no way to know how well these tools perform.

For Kodit, it’s hard for me to explain to my users how much Kodit improves a coding assistant. From experience I know the effect is positive. In demos I can see it succeed where the assistant failed before. But it’s still incredibly hard to quantify.

But I’m actively working on this. Future posts will share more concrete results and learnings. For now, the main point is: be wary of the leaderboards and the opinions.